This guide draws on established finance and enrollment analytics frameworks to help admissions leaders build a defensible ROI case for AI investments.

AI is showing up everywhere in enrollment: chatbots answering inquiries at midnight, yield models flagging students who are likely to melt, and automated campaigns pushing deposits at the right moment. But when your CFO or provost asks, “What did we actually get from this?” most enrollment teams don’t have a clear answer.

If you’ve ever asked “How do I measure ROI for AI initiatives in enrollment?” and needed an answer that holds up to scrutiny, you’re not alone.

That’s the gap this guide closes. This is your practical, step-by-step breakdown of how to measure ROI for AI initiatives in enrollment — whether you’re piloting your first AI tool or scaling one that’s already live. The framework is finance-grade, defensible, and one you can actually take into a leadership meeting.

What counts as AI in enrollment

Before you can measure ROI, you need to agree on what you’re measuring. AI in enrollment typically includes:

- Conversational AI and chatbots — 24/7 inquiry handling, application status updates, FAQ deflection from your admissions staff.

- Lead scoring and prioritization — Ranking prospects by likelihood to apply or enroll, so counselors can focus on the right students first.

- Yield modeling — Predicting which admitted students are likely to deposit or melt, so you can intervene before it’s too late.

- Behavioral nudging and campaign automation — Triggering personalized outreach based on a student’s actions (or inactions) in the funnel.

- Document and process automation — Routing applications, flagging incomplete files, automating decision letters.

Each use case carries different costs, different benefit profiles, and different measurement timelines. Keep them distinct in your model.

Start with a baseline and define success clearly

ROI is meaningless without a before. Before you launch any AI tool, lock in your baseline metrics for each stage of the enrollment funnel.

Ruffalo Noel Levitz publishes annual enrollment funnel benchmarks through their E-Expectations Tracker and Student Retention Indicators Report — these are the most widely used external benchmarks for contextualizing your institution’s conversion rates against peer institutions.

Your metrics should include:

- Inquiry-to-application conversion rate

- Application completion rate

- Admit-to-deposit yield

- Melt rate (deposits lost before enrollment)

- Time-to-response for inquiries

- Advisor caseload and average contacts per student

- Cost per enrollment

Document these by cohort, term, and student segment (domestic vs. international, transfer vs. freshman, etc.). The more granular your baseline, the cleaner your attribution later.
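To make the baseline concrete, here is a minimal sketch of turning raw stage counts into the rates listed above, keyed by term and segment. The class name, field names, and sample counts are hypothetical and chosen only for illustration; map them to whatever your CRM or SIS actually exports.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FunnelCounts:
    """Raw stage counts for one cohort/segment (hypothetical field names)."""
    inquiries: int
    applications_started: int
    applications_completed: int
    admits: int
    deposits: int
    enrolled: int


def _rate(numerator: int, denominator: int) -> float:
    # Return 0.0 for empty segments instead of raising ZeroDivisionError.
    return numerator / denominator if denominator else 0.0


def baseline_metrics(c: FunnelCounts) -> dict[str, float]:
    """Convert stage counts into baseline funnel rates."""
    return {
        "inquiry_to_application": _rate(c.applications_started, c.inquiries),
        "application_completion": _rate(c.applications_completed, c.applications_started),
        "admit_to_deposit_yield": _rate(c.deposits, c.admits),
        "melt_rate": _rate(c.deposits - c.enrolled, c.deposits),
    }


# Illustrative counts only, keyed by (term, segment).
baselines = {
    ("Fall 2024", "domestic freshman"): baseline_metrics(
        FunnelCounts(
            inquiries=10_000,
            applications_started=2_000,
            applications_completed=1_600,
            admits=1_200,
            deposits=300,
            enrolled=270,
        )
    ),
}
```

Storing one record per term and segment keeps the pre-AI numbers frozen, so later comparisons are made against documented values rather than recollection.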

Set success thresholds before you go live

Define what success looks like before you go live. A +1.5 percentage point yield improvement? A 20% reduction in inquiry response time? Cutting advisor time on routine tasks by 30%? Put a number on it. If your vendor can’t tell you what realistic outcomes look like, that’s a red flag.

How to measure ROI for AI initiatives in enrollment: Key steps

Measuring ROI isn’t a one-time event at the end of a fiscal year. It’s a structured process that runs parallel to your AI deployment. Here’s how to approach it step by step.

Step 1: Define your measurement unit

Decide whether you’re measuring at the campaign level, cohort level, or full-funnel level. Cohort-level tracking (e.g., Fall 2025 freshman applicants exposed to AI chatbot vs. not) tends to produce the cleanest comparisons.

Step 2: Set up exposure tracking

Every student who interacts with an AI system should be flagged in your CRM. Track the date, channel, AI tool used, and funnel stage at the time of interaction. Without this, attribution is guesswork.
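One way to picture the exposure flag is as a small record per interaction, carrying the four attributes named above. This is a sketch only: `AIExposure` and its field names are hypothetical, and a real CRM would store this as custom fields or activity records rather than Python objects.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class AIExposure:
    """One AI interaction for one student (hypothetical schema)."""
    student_id: str
    interaction_date: date
    channel: str        # e.g. "web chat", "sms"
    ai_tool: str        # e.g. "chatbot", "yield_nudge"
    funnel_stage: str   # stage at the time of interaction


def exposed_ids(events: list[AIExposure], tool: str) -> set[str]:
    """Students who interacted with a given AI tool at least once."""
    return {e.student_id for e in events if e.ai_tool == tool}


def first_exposure(events: list[AIExposure], student_id: str) -> Optional[date]:
    """Earliest interaction date for a student, or None if never exposed."""
    dates = [e.interaction_date for e in events if e.student_id == student_id]
    return min(dates) if dates else None


# Illustrative events only.
events = [
    AIExposure("S1", date(2025, 1, 10), "web chat", "chatbot", "inquiry"),
    AIExposure("S1", date(2025, 2, 1), "sms", "yield_nudge", "admitted"),
    AIExposure("S2", date(2025, 1, 15), "web chat", "chatbot", "applicant"),
]
chatbot_students = exposed_ids(events, "chatbot")
```

Recording the funnel stage at the time of contact matters because the same student may be exposed to different tools at different stages, and each tool should be evaluated against the stage it was meant to influence.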

Step 3: Choose an attribution method

This is where most teams get sloppy. The options, in order of rigor:

- Randomized A/B testing — Randomly assign a portion of your prospect pool to receive AI-driven engagement; compare outcomes. Gold standard, but requires volume and planning.

- Holdout groups — Similar to A/B, but you withhold the AI treatment from a control group rather than varying the message. Useful for yield models.

- Difference-in-differences (Diff-in-Diff) — Compare outcome changes for the treated group vs. a comparable untreated group across two time periods. Works well if you introduced AI mid-cycle.

- Uplift modeling — Uses ML to estimate the incremental effect of an intervention per student. Requires data science capability, but produces the most actionable outputs.
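Of the methods listed, difference-in-differences is the easiest to sketch in a few lines. The function below is a bare-bones illustration that assumes you already have an outcome rate (yield, for example) for a treated and a comparable untreated group in two periods; the rates shown are made up, and a real analysis would also check that the two groups were trending similarly before the AI was introduced.

```python
def diff_in_diff(
    treated_before: float,
    treated_after: float,
    control_before: float,
    control_after: float,
) -> float:
    """Change in the treated group minus change in the control group."""
    treated_change = treated_after - treated_before
    control_change = control_after - control_before
    return treated_change - control_change


# Illustrative yield rates only.
estimated_effect = diff_in_diff(
    treated_before=0.22,
    treated_after=0.25,
    control_before=0.21,
    control_after=0.22,
)
# The treated group rose 3 points and the control group rose 1 point,
# so roughly 2 points of the change is attributed to the intervention.
```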

If you’re a smaller institution without the infrastructure for formal experiments, start with a simple holdout: pull a 10–15% random sample of your admitted students out of your AI yield campaign, measure their deposit rate, and compare it to the treated group.
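The simple holdout described above could look something like the sketch below. It assumes a fixed random seed so the assignment is reproducible, and it adds a pooled two-proportion z-statistic as a rough check on whether the gap is larger than noise. The function names, seed, and counts are illustrative assumptions, not a prescribed method.

```python
import math
import random


def assign_holdout(student_ids, holdout_share: float = 0.10, seed: int = 2025) -> set:
    """Randomly pick a share of admitted students to exclude from the AI campaign."""
    rng = random.Random(seed)        # fixed seed makes the split reproducible
    ids = sorted(student_ids)        # stable order before sampling
    k = round(len(ids) * holdout_share)
    return set(rng.sample(ids, k))


def deposit_lift(treated_deposits: int, treated_n: int,
                 holdout_deposits: int, holdout_n: int) -> tuple[float, float]:
    """Return (difference in deposit rate, pooled two-proportion z-statistic)."""
    p_treated = treated_deposits / treated_n
    p_holdout = holdout_deposits / holdout_n
    pooled = (treated_deposits + holdout_deposits) / (treated_n + holdout_n)
    se = math.sqrt(pooled * (1 - pooled) * (1 / treated_n + 1 / holdout_n))
    z = (p_treated - p_holdout) / se if se else 0.0
    return p_treated - p_holdout, z


# Illustrative counts only: 1,000 treated admits, 100 held out.
lift, z_stat = deposit_lift(treated_deposits=235, treated_n=1000,
                            holdout_deposits=22, holdout_n=100)
```

With a holdout this small the z-statistic will often be modest even when the lift is real, which is one reason smaller institutions may need to pool results across more than one cycle before drawing conclusions.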

Step 4: Measure at the right cadence

Don’t wait until the end of the cycle. Set monthly check-ins for leading indicators (response time, application completion rate) and quarterly reviews for lagging indicators (yield, melt, retention).

Step 5: Build your financial model

Once you have outcome data, translate it into dollars. More on this below.

Key metrics to track

Tracking the right metrics is what separates a credible ROI story from a gut-feel claim. Group your metrics into three buckets:

Funnel performance metrics

- Inquiry-to-application conversion rate

- Application completion rate

- Admit-to-deposit yield

- Deposit-to-enrollment (melt rate)

- Term-over-term retention rate

- Show rate for orientation/first day

Operational efficiency metrics

- Average inquiry response time

- Advisor contacts per student

- Percentage of inquiries deflected to self-service

- Staff hours on routine vs. high-value tasks

- Cost per enrollment

Financial and risk metrics

- Net tuition revenue per enrolled student (after discounting)

- Student lifetime value (LTV)

- Cost to acquire a student (CAC)

- LTV-to-CAC ratio

- FERPA/compliance incident rate (risk reduction)

These metrics feed directly into your financial model. If you’re not tracking most of them already, fixing your data infrastructure is a prerequisite to meaningful AI ROI measurement.

Building the financial model

There are three sources of value to capture: revenue uplift, cost savings, and risk reduction.

Disclaimer: All figures referenced in this section are illustrative. Where industry benchmarks are cited, sources are noted. Always validate against your institution’s own data before presenting to leadership.

Revenue uplift

This is the big number, and it flows from incremental enrollments.

Incremental Revenue = Incremental Enrollments × Net Tuition per Year × Average Years Enrolled (discounted) × Retention Factor

Use net tuition after institutional aid, not sticker price. According to the NACUBO Tuition Discounting Study, institutional aid as a share of tuition revenue has reached record levels — making it critical to model from your actual net tuition figure rather than list price. If your average discount rate is 45% on a $28,000 list price, your net tuition is around $15,400.
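The revenue formula and the net tuition adjustment translate directly into code. The sketch below uses the 45% discount and $28,000 list price from the text; the function names are hypothetical, and the enrollment, duration, and retention inputs in the usage lines are placeholders rather than recommended values.

```python
def net_tuition(list_price: float, discount_rate: float) -> float:
    """Tuition actually collected per student per year, after institutional aid."""
    return list_price * (1 - discount_rate)


def incremental_revenue(
    incremental_enrollments: float,
    net_tuition_per_year: float,
    avg_years_enrolled: float,   # already discounted, as in the formula above
    retention_factor: float,
) -> float:
    """Incremental Enrollments x Net Tuition x Average Years Enrolled x Retention Factor."""
    return (incremental_enrollments * net_tuition_per_year
            * avg_years_enrolled * retention_factor)


# From the text: 45% discount on a $28,000 list price is about $15,400 net.
net = net_tuition(28_000, 0.45)

# Placeholder inputs to show the shape of the calculation.
example_revenue = incremental_revenue(
    incremental_enrollments=100,
    net_tuition_per_year=net,
    avg_years_enrolled=3.0,
    retention_factor=0.80,
)
```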

Cost savings

Quantify staff time recovered. If your AI chatbot deflects 30% of routine inquiry volume, calculate the hours saved at your staff’s average fully-loaded hourly cost. Include: call center volume reduction, advisor time on status updates, manual follow-up campaigns that are now automated.
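As a rough sketch of the staff-time calculation, the function below converts deflected inquiries into hours and then dollars. The 30% deflection rate comes from the text; the inquiry volume, handling time, and hourly cost are made-up placeholders that you would replace with your own figures.

```python
def annual_cost_savings(
    annual_inquiries: int,
    deflection_rate: float,
    minutes_per_inquiry: float,
    loaded_hourly_cost: float,
) -> float:
    """Dollar value of staff hours no longer spent on deflected inquiries."""
    deflected = annual_inquiries * deflection_rate
    hours_saved = deflected * minutes_per_inquiry / 60
    return hours_saved * loaded_hourly_cost


# Placeholder inputs: 40,000 inquiries, 6 minutes each, $35/hour fully loaded.
savings = annual_cost_savings(
    annual_inquiries=40_000,
    deflection_rate=0.30,
    minutes_per_inquiry=6,
    loaded_hourly_cost=35.0,
)
# 12,000 deflected inquiries x 0.1 hours x $35 comes to about $42,000.
```

Whether recovered hours count as hard savings or as redeployed capacity is a judgment call to settle with your finance office before the number goes into the model.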

Risk reduction

Harder to quantify, but real. If AI flags a FERPA-sensitive data exposure before it becomes an incident, assign a probability-weighted cost: P(incident) × estimated remediation and reputational cost. Use your institution’s historical incident costs or published benchmarks if you don’t have your own.

Total cost of ownership (TCO)

Don’t undercount costs. The full picture includes:

- Software licenses and platform fees

- Cloud compute and infrastructure

- CRM/SIS integration and ETL work

- Data labeling and model training

- Ongoing model monitoring and model ops

- Security, legal, and compliance review

- Change management and staff training

- Internal staff time for prompt/feature engineering and support

Integration and change management costs are consistently higher than institutions expect. Build in a buffer.

The formula

ROI = Net Benefit / Total Cost

Net Benefit = Revenue Uplift + Cost Savings + Risk Reduction − Total Cost

For multi-year investments, use NPV:

NPV = Σ(Cash Flow in Year t / (1 + discount rate)^t) − Initial Investment

Use your institution’s standard capital project discount rate, typically set by your finance office. If one isn’t established, a rate aligned with your cost of borrowing or bond rate is a reasonable starting point.

Also calculate payback period (how many terms until cumulative benefit exceeds cost) and Internal Rate of Return (IRR) if your finance team uses it for capital comparisons.
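The three measures above can be sketched in a few lines of plain Python. This is an illustration under simple assumptions: cash flows arrive at the end of each year starting in year 1, and payback is counted in whole terms. The function names are hypothetical, and IRR is left out because it needs an iterative solver that your finance team's tooling will already provide.

```python
from typing import Optional, Sequence


def roi(total_benefit: float, total_cost: float) -> float:
    """Net benefit (benefit minus cost) divided by total cost."""
    return (total_benefit - total_cost) / total_cost


def npv(cash_flows: Sequence[float], discount_rate: float,
        initial_investment: float) -> float:
    """Sum of discounted yearly cash flows (years 1..n) minus the initial investment."""
    discounted = sum(
        cf / (1 + discount_rate) ** t
        for t, cf in enumerate(cash_flows, start=1)
    )
    return discounted - initial_investment


def payback_period(benefits_per_term: Sequence[float],
                   total_cost: float) -> Optional[int]:
    """Number of terms until cumulative benefit reaches or exceeds cost, else None."""
    cumulative = 0.0
    for term, benefit in enumerate(benefits_per_term, start=1):
        cumulative += benefit
        if cumulative >= total_cost:
            return term
    return None


# Illustrative inputs only.
example_npv = npv([110.0, 121.0], discount_rate=0.10, initial_investment=100.0)
example_payback = payback_period([100.0, 200.0, 300.0], total_cost=250.0)
```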

Worked example: AI chatbot + yield model

Here’s an end-to-end calculation to make this concrete.

ILLUSTRATIVE EXAMPLE — NOT A PERFORMANCE GUARANTEE

The figures below are hypothetical and designed to demonstrate the model structure.

Your institution’s results will depend on baseline conversion rates, net tuition, AI tool performance, and cost structure.

Always build and validate your own model using your institution’s data.

Setup and assumptions

A mid-size university deploys an AI chatbot for inquiry handling and a yield prediction model for undergraduate admissions. Annual all-in cost: $420,000.

- Prospect pool: 50,000

- Baseline application rate: 18%; AI-driven lift: +3 percentage points → 1,500 incremental applicants

- Baseline admit-to-deposit yield: 22%; AI-driven lift: +1.5 percentage points → ~330 incremental deposited students (after accounting for admit rate)

- Not all deposits enroll: assume 85% show rate → ~280 incremental enrollments

- Net tuition per student per year: $14,000

- Average enrollment duration (discounted at 5%): 3.2 years

- Retention factor: 80% (see IPEDS Data Center for your institution’s Carnegie classification baseline)

The numbers

Revenue uplift: 280 enrollments × $14,000 × 3.2 years × 0.80 retention ≈ $10.04M over the cohort’s lifetime

On an annualized basis (first-year tuition only): 280 × $14,000 = $3.92M

Cost savings: Estimate your fully-loaded cost per handled contact by dividing your contact center’s total annual cost (staff salaries, benefits, overhead, technology) by total annual contact volume, then multiply by the number of contacts the chatbot deflects. For reference, industry benchmarks in higher ed contact center operations typically range from $8–$18 per contact depending on staffing model and inquiry complexity. This example assumes $336,000 in annual savings.

Total annual benefit (Year 1): $3,920,000 + $336,000 = $4,256,000

ROI (Year 1): ($4,256,000 − $420,000) / $420,000 = 913%

Payback period: Well under one cycle.
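If you want to check the arithmetic yourself, the worked example can be reproduced with the short script below. Every input is taken from the setup above. Two values are treated as given rather than derived: the ~330 incremental deposits, because the admit rate behind it is not stated, and the $336,000 cost savings figure.

```python
# Inputs from the worked example (all hypothetical, per the disclaimer above).
prospect_pool = 50_000
application_lift = 0.03            # +3 percentage points
incremental_deposits = 330         # taken as given; admit rate is not stated
show_rate = 0.85
net_tuition_per_year = 14_000
avg_years_enrolled = 3.2           # already discounted at 5%
retention_factor = 0.80
cost_savings = 336_000             # taken as given
annual_cost = 420_000

incremental_applicants = prospect_pool * application_lift      # about 1,500
raw_enrollments = incremental_deposits * show_rate             # about 280.5
incremental_enrollments = 280                                  # rounded as in the text

lifetime_revenue = (incremental_enrollments * net_tuition_per_year
                    * avg_years_enrolled * retention_factor)   # about $10.04M
first_year_revenue = incremental_enrollments * net_tuition_per_year

year1_benefit = first_year_revenue + cost_savings
year1_roi = (year1_benefit - annual_cost) / annual_cost

print(f"Lifetime revenue uplift: ${lifetime_revenue:,.0f}")
print(f"Year 1 benefit: ${year1_benefit:,.0f}")
print(f"Year 1 ROI: {year1_roi:.0%}")
```

Keeping the inputs as named variables at the top makes it easy to swap in your own baseline rates and rerun the model.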

| Scenario | Yield Lift | Deflection Rate | Year 1 Benefit | ROI |
| --- | --- | --- | --- | --- |
| Conservative | +0.75 pp | 15% | ~$1.1M | ~162% |
| Base Case | +1.5 pp | 35% | ~$4.3M | ~913% |
| Optimistic | +2.5 pp | 50% | ~$6.8M | ~1,519% |

Governance, bias, and compliance

Measuring ROI is not just about dollars. It’s about making sure your AI is working fairly and legally.

FERPA compliance

Any AI system that accesses student records must be covered by your institution’s data use agreements. Verify that your vendor qualifies as a ‘school official’ under FERPA before granting data access — the Department of Education’s official guidance on this determination is available at FERPA.ed.gov, and requirements have been updated as cloud-based and AI vendor relationships have evolved.

Algorithmic bias

Your yield model may inadvertently penalize students from underrepresented groups if your training data reflects historical inequities. Audit model outputs by race/ethnicity, Pell eligibility, and first-generation status at least once per cycle. If your AI is systematically under-serving certain populations, that’s both an equity problem and a legal risk.
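A basic version of that audit compares how often each group receives the AI-driven intervention. The sketch below assumes each student record is a dictionary with a group attribute and a boolean flag; the key names (`pell_eligible`, `received_outreach`) and the sample records are hypothetical. It reports each group's rate relative to the best-served group, and what ratio should trigger a review is a decision for your institution and its counsel.

```python
from collections import defaultdict


def rate_by_group(records: list[dict], group_key: str,
                  flag_key: str = "received_outreach") -> dict:
    """Share of students in each group for whom the flag is true."""
    totals = defaultdict(int)
    flagged = defaultdict(int)
    for record in records:
        group = record[group_key]
        totals[group] += 1
        if record[flag_key]:
            flagged[group] += 1
    return {group: flagged[group] / totals[group] for group in totals}


def disparity_ratios(rates: dict) -> dict:
    """Each group's rate divided by the highest group rate (1.0 = best served)."""
    best = max(rates.values())
    return {group: (rate / best if best else 0.0) for group, rate in rates.items()}


# Illustrative records only.
records = [
    {"pell_eligible": True, "received_outreach": True},
    {"pell_eligible": True, "received_outreach": False},
    {"pell_eligible": True, "received_outreach": True},
    {"pell_eligible": True, "received_outreach": False},
    {"pell_eligible": False, "received_outreach": True},
    {"pell_eligible": False, "received_outreach": True},
    {"pell_eligible": False, "received_outreach": True},
    {"pell_eligible": False, "received_outreach": True},
]
rates = rate_by_group(records, "pell_eligible")
ratios = disparity_ratios(rates)
```

The same two functions can be rerun with race/ethnicity or first-generation status as the `group_key`, and with outcome flags such as deposit or enrollment in place of outreach.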

Don’t collect data you don’t need. Define data retention policies before deployment. Log all model versions and prompt changes so you can audit what a student experienced at any given point in the funnel.

Legal note: The guidance in this section reflects general best practices and is not legal advice. FERPA requirements, vendor agreement obligations, and AI governance standards vary by institution type and are subject to regulatory change. Consult your institution’s legal counsel before finalizing vendor contracts, data use agreements, or bias audit protocols.

Tooling and operating model

A good ROI measurement practice needs owners, not just processes.

Who owns what

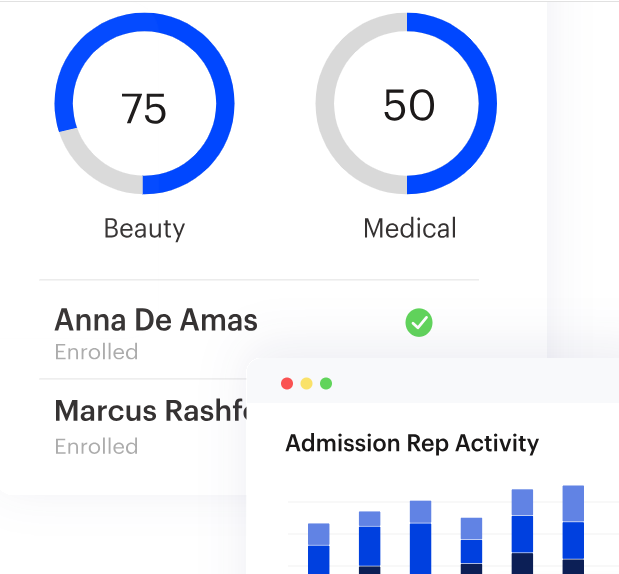

- Enrollment analytics team: funnel metrics, exposure tracking, experiment design

- Finance/budget office: cost accounting, NPV/IRR validation

- IT/data team: data pipelines, CRM integration, model monitoring

- Legal/compliance: FERPA review, vendor agreements, bias audits

Dashboard requirements

Build a live enrollment analytics dashboard that shows funnel performance by cohort, AI-exposed vs. control, updated at least weekly during active recruitment cycles. Static end-of-cycle reports are too late to act on.

Review cadence

Monthly operational reviews (leading indicators), quarterly financial reviews (ROI tracking), annual strategic review (full NPV, model refresh, contract renegotiation decisions).

Common pitfalls and how to avoid them

- Measuring output instead of outcomes. A chatbot handling 10,000 conversations is an output. Incremental enrollments are an outcome. Tie every metric back to the funnel.

- Ignoring the counterfactual. If enrollment went up 5% this year, how much of that was the AI and how much was demographic tailwinds or a competitor closing? You need a control group to answer this.

- Undercounting costs. The license fee is the visible part. Integration, training, model monitoring, and compliance review add up fast. Build a full TCO before signing anything.

- Measuring too early. Yield models often don’t show their full impact until the second or third cycle, after the model has been trained on institution-specific data. Set stakeholder expectations accordingly.

- Attribution to a single tool. Students interact with multiple touchpoints — email, counselor calls, campus visits, chatbot, yield nudges. Use multi-touch attribution frameworks in your CRM rather than crediting everything to the last AI interaction.

- Skipping the baseline. If you didn’t document your pre-AI funnel metrics, you’re measuring ROI against a number you made up. This is the most common and most avoidable mistake.
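On the single-tool attribution pitfall: the simplest multi-touch scheme is linear attribution, which splits credit for each enrolled student evenly across every touchpoint in their journey. The sketch below is one possible illustration and is not tied to any particular CRM's attribution feature; the touchpoint names are taken from the list above and the journeys are made up.

```python
from collections import defaultdict


def linear_attribution(journeys: list[list[str]]) -> dict[str, float]:
    """Split one unit of credit per enrolled student evenly across their touchpoints."""
    credit: dict[str, float] = defaultdict(float)
    for touchpoints in journeys:
        if not touchpoints:
            continue  # no recorded touchpoints, nothing to credit
        share = 1.0 / len(touchpoints)
        for touchpoint in touchpoints:
            credit[touchpoint] += share
    return dict(credit)


# Illustrative journeys for two enrolled students.
journeys = [
    ["email", "chatbot"],
    ["chatbot", "counselor call", "campus visit", "chatbot"],
]
credit = linear_attribution(journeys)
```

Attribution of this kind distributes credit but does not establish causality, so it complements a control group rather than replacing one.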

ROI measurement checklist

Before you launch:

- Baseline funnel metrics documented by cohort and segment

- Exposure tracking configured in CRM

- Control/holdout group defined

- Full TCO calculated (licenses, integration, training, compliance)

- FERPA and data use agreements in place

- Success thresholds defined and agreed on by stakeholders

During deployment:

- Monthly leading-indicator reviews in place

- Model version and prompt changes logged

- Bias audit scheduled for mid-cycle

At cycle end:

- Incremental outcomes calculated vs. control

- Revenue uplift and cost savings quantified

- NPV, ROI, and payback period presented to finance and cabinet

- Model performance reviewed; retraining plan in place

Wrapping up

Measuring ROI for AI initiatives in enrollment isn’t optional — it’s how you protect the investment, earn continued support from leadership, and make smarter decisions about what to scale and what to cut. The institutions that get this right aren’t the ones with the most sophisticated AI. They’re the ones with the most disciplined measurement practice.

About LeadSquared

LeadSquared Higher Education CRM is designed to support the measurement and management practices described in this guide — from lead scoring and yield prediction to automated nudges and funnel analytics — all in one place. It brings AI capabilities directly into your enrollment workflow.

Instead of stitching together data from five different systems, your team gets a single source of truth for tracking AI-driven outcomes, attributing impact, and reporting ROI to leadership.

See how LeadSquared can help your institution measure, manage, and maximize the return on every AI initiative in your enrollment funnel.

FAQs

How do you measure ROI of AI in enrollment?

To measure ROI of AI, start by documenting your baseline funnel metrics before deployment — application completion rates, yield, melt, cost per enrollment — then track outcomes for AI-exposed students against a control group. Once you have the data, plug it into the formula: ROI = Net Benefit / Total Cost, where net benefit includes revenue uplift from incremental enrollments, staff time saved, and risk reduction.

How long does it take to see ROI from AI in enrollment?

For operational savings (call deflection, advisor time), you may see returns within one cycle. For yield and retention improvements, plan for two to three cycles before drawing firm conclusions — models improve as they accumulate institution-specific data.

What is a key challenge for companies scaling AI in enrollment?

One of the biggest challenges when scaling AI is attribution — knowing with confidence that your AI drove a specific outcome rather than other factors like a competitor closing or favorable demographics. Without a proper control group or holdout methodology, you’re essentially measuring correlation and calling it causation. This erodes trust with finance and other stakeholders, making it harder to secure budget for future AI investments. Setting up clean experiment design from the start is what separates institutions that can scale AI confidently from those that stall after the pilot.

How do we measure ROI for AI that improves staff productivity rather than directly driving enrollments?

Calculate hours recovered per staff member, multiply by fully-loaded hourly cost, and translate that into either cost savings or redeployed capacity. If an advisor saves 5 hours per week on routine tasks (something that platforms like LeadSquared enable them to do) and redirects that time to high-touch recruitment, estimate the downstream enrollment impact of that additional counseling.

How do we account for AI investments that span multiple budget cycles?

Use a multi-year NPV model. Map out projected costs and benefits by year (or by term), discount each year’s cash flow back to present value, and sum them up. This gives you a single number that accounts for the time value of money and makes comparisons with other capital investments straightforward.